Playing this stream requires hardware at least as powerful as a Core 2 Duo or a quad-core ARM: http://www.iginomanfre.it/x70_hevc_1200

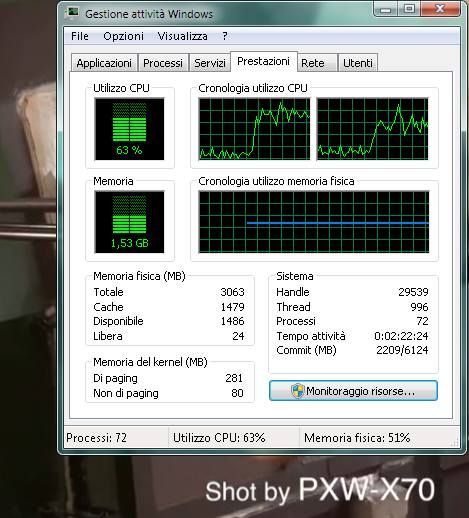

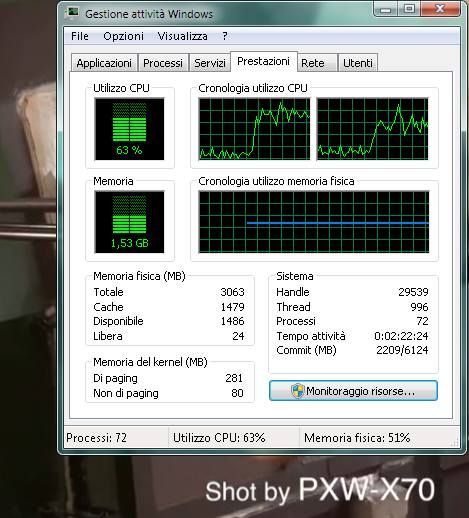

CPU load before (15%) and after starting playback of the 1.2 Mbps 720p24 stream on my Core 2 Duo laptop.

JVET stands for Joint Video Exploration Team. We must become familiar with this acronym, as we did with HEVC (or, more simply, H.265) a few years ago.

JVET is the next chapter in how the joint team of ITU-T SG 16 WP 3 and ISO/IEC JTC 1/SC 29/WG 11 plans to squeeze video.

These webpages from the ITU website and from Fraunhofer may be more detailed.

The meetings of VCEG (Video Coding Experts Group) started in October 2015, beside Lake Geneva, when most of us had barely heard of HEVC.

Now, as usual, there is a reference software for the JVET group, called JEM (Joint Exploration Model), which will probably let us see stars...

The "all meetings" page of the JVET document management system, hosted by the University of Sud Paris, lists the documents grouped by JVET meeting (the rest of the site, like the draft standard, still seems to be a work in progress). But going back about 30 years, how many would have spent a cent on MPEG?

As written in a paper dated July 2017 but circulated at the Macau meeting:

For many applications, compression efficiency is the most important property of a future video coding standard.

A substantial improvement in compression efficiency compared to HEVC Main Profile is required for the target application(s); at no point of the entire bit rate range shall it be worse than existing standard(s).

30% bitrate reduction for the same perceptual quality is sufficient for some important use-cases and may justify a future video coding standard.

Other use-cases may require higher bit-rate reductions such as 50%.

We must all stay tuned!

About 20 years ago, video on the web was far from being as widespread as it is today: YouTube did not exist and, more importantly, to stream something you had to produce at least three files compressed with three proprietary, similar but mutually incompatible compression schemes (QuickTime, Windows Media and RealVideo).

Over these years we passed through Flash, MPEG-4, dynamic streaming, H.264, DASH and lastly H.265. Recently I worked on the catalogue of TIM vision, mapping the contents to the many profiles to be compressed in H.265, not exactly in the cloud (the story could be titled "how to get all the inconveniences of the cloud"; as you can imagine, it is a long one, not to be dealt with now).

H.265 proves to be an astonishing compression scheme. It is possible to compress HD at 1 Mbps. Compression artifacts almost disappear but, after the problems related to the licensing scheme (see below what I wrote about one year ago), there is a main question: the match between DASH and HEVC does not convince me much. In HEVC the I frames as we knew them disappear, but in order to cut the file into chunks for DASH we must introduce artificial (not video related) break points (say, every 5 seconds) to be able to switch in sync for bandwidth adaptation.
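As a rough sketch of what those artificial break points imply, the few lines of Python below compute how many frames separate two forced break points for a 24 fps stream cut every 5 seconds, and where the segments would start. The function names are made up for this illustration; `keyint`, `min-keyint` and `scenecut` are x265 encoder option names, but using them this way to force a fixed cadence is my assumption about a typical setup, not a tested recipe.

```python
def keyframe_interval(fps: float, segment_seconds: float) -> int:
    """Number of frames between two forced break points."""
    return round(fps * segment_seconds)


def segment_starts(total_frames: int, fps: float, segment_seconds: float) -> list:
    """Frame indexes at which each DASH segment would begin."""
    step = keyframe_interval(fps, segment_seconds)
    return list(range(0, total_frames, step))


def x265_params(fps: float, segment_seconds: float) -> str:
    """Option string asking the encoder for a fixed break point cadence.

    Assumption: scene-cut detection is switched off so that break points
    fall only on the segment boundaries.
    """
    interval = keyframe_interval(fps, segment_seconds)
    return f"keyint={interval}:min-keyint={interval}:scenecut=0"


if __name__ == "__main__":
    print(keyframe_interval(24, 5))
    print(segment_starts(24 * 20, 24, 5))
    print(x265_params(24, 5))
```

For 24 fps and 5 second segments the interval is 120 frames: one break point every 120 pictures, whether or not the video content calls for it.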

The DASH playlist file (officially .mpd) may contain 10 references to many audio and video resolutions. It means that you may go from a 45 GB source MXF file to a 10 GB HEVC file set. The providers of compression engines and storage are happy because they sell their services or systems by the pound, but I ask: why not apply chromatic and spatial resolution scalability?
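To put numbers on the storage side, here is a minimal sketch that estimates the size of each rendition from its bitrate and duration. The two hour duration and the ladder of bitrates are hypothetical values chosen for illustration (only the 0.3 and 1.2 Mbps figures echo the test streams on this page), and audio and container overhead are ignored.

```python
def size_gb(bitrate_mbps: float, duration_s: float) -> float:
    """Approximate file size in decimal gigabytes at a constant bitrate."""
    megabits = bitrate_mbps * duration_s
    return megabits / 8 / 1000  # megabits -> megabytes -> gigabytes


# Hypothetical ladder of video bitrates, in Mbps
LADDER_MBPS = [0.3, 0.6, 1.2, 2.5, 5.0]
# Hypothetical programme duration: two hours
DURATION_S = 2 * 3600

sizes = {rate: size_gb(rate, DURATION_S) for rate in LADDER_MBPS}
total_gb = sum(sizes.values())

if __name__ == "__main__":
    for rate, gb in sizes.items():
        print(f"{rate:>4} Mbps -> {gb:.2f} GB")
    print(f"whole set -> {total_gb:.2f} GB")
```

Every rendition is a full copy of the same content, which is exactly the redundancy that a scalable approach would try to avoid.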

To understand what the HEVC scalability extension is, read the article SHVC, the Scalable Extensions of HEVC, and Its Applications on the ZTE Corp. (Shenzhen, PRC) website.

In practice, with the scalable extensions, instead of many versions of the same content encoded at different bitrates and sizes, one base layer is produced plus some enhancement layers that add information. These enhancements may be transmitted through a DASH approach.

While you are at it, read also this article about multilayering in HEVC, from the same source.

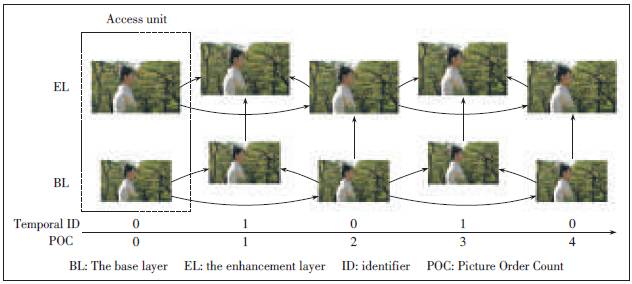

The above picture (from Multi-Layer Extension of the High Efficiency Video Coding (HEVC) Standard, same source as the former) sketches an example SHVC bitstream with spatial scalability in two layers: a base layer (BL) of lower resolution and one (here, only one) enhancement layer (EL), a delta toward a higher resolution. Consider that, since in HEVC the Group of Pictures (GoP) concept as it was known up to H.264 disappears (so I, B and P frames do not exist anymore), every tile and/or slice into which the image sequence is decomposed has its own history and evolution. The point is that, instead of switching between files of different resolutions and bitrates, the receiver gets a base layer and several deltas.

It may be extremely interesting to read (and download) the HEVC licensing scheme from HEVC Advance: visit their website and take a look at the frequently updated licence section.

At the moment I do not know of any real broadcasting tests of HEVC, despite the marketing efforts. My biggest concern is related to the potential bandwidth of a transport stream broadcast over the air, by cable or by satellite.

The quality vs bitrate of HEVC is really astonishing, but its lack of GoP does not marry well with conventional transmission methods such as the transport stream, or with DASH, whose segmentation is at the moment GoP prone (I mean: cutting a segment right before an I-frame works better).

The tests I'm conducting (360p24 and 720p24) show a high sensitivity to the bandwidth characteristics: if your connection is really broadband you'll never experience any problem... otherwise...

In February 2015 the availability of an open source HEVC codec (High Efficiency Video Coding, also known as H.265) means it is ready to be used by all of us.

To read or download the standard, go to the ITU website and select the latest available version (as of writing, on May 23rd, the latest release of April 2015 was still not available). All ITU standards can be downloaded for free in PDF format. It is extremely valuable that standards are made public at no cost. In the media industry the best known are H.262 (MPEG-2) and H.264 (AVC). Please consider how much all the international bodies that sell their standards damage interoperability and the progress of technology, by denying the interested audience easy access to the reference documents.

The more than 500 pages of the h265 standard may be too complex at first sight.

For basic information you may read these Wikipedia articles about the H.265 standard or its tiers and levels (the profiles and levels of former MPEG standards).

It is terrible but, beyond any technological risk HEVC might face, it seems that a number of lawyers are trying to assault this new train of technology before its rollout.

In April last year HEVC Advance produced a price list (officially, Patent Pool pricing), updated in December. In reaction to these initiatives of April and December 2015, the Alliance for Open Media was founded (a joint initiative of Amazon, Cisco, Google, Intel, Microsoft, Mozilla and Netflix) to create a new, royalty-free, open-source video compression format.

Last came MPEG LA (which literally killed MPEG-4 in 2001), which, later than its more aggressive colleagues, defined a specific new initiative of its own.

What do they want to do? A proprietary open standard like VPn?

Doesn't the MPEG story teach these people anything?

At the moment I'm still trying to understand how HEVC can be compatible with dynamic streaming (excluding a simpler profile enabling something like GoP-aligned segmentation, which would nullify a lot of the compression gains). Even the naive way of streaming (what I'm using above) suffers from bandwidth fluctuation, but the quality benefits from the algorithm.

Now these lawyers, killers of standards (whose performance we already witnessed in 2001), could kill even HEVC.

I hope not.

I remember 1989, when the first MPEG-1 standard papers came out. Plenty of time has passed, but we must not forget that it was the first brick (or rather a stone: think of the success of MP3!) that affected media technology as never before.

I was part of it. You may be interested in reading A lot of passes have been done...

And today, HEVC being a hot subject, I could not refrain from writing something down:

Embedded 360p24 HEVC HTML page (February 2015)

Decoding it requires the VideoLAN VLC player, version 2.2.0 or later. VLC is available under the LGPL from the VideoLAN website.

Video embedding does not work on smartphones, for which browser plug-ins have not been released (and probably never will be).

Smartphones, after installing VLC for Android or iOS, can access the stream at http://www.iginomanfre.it/x70_hevc_300

Embedded 720p24 HEVC HTML page (February 2015)

Playing this stream requires hardware at least as powerful as a Core 2 Duo or a quad-core ARM: http://www.iginomanfre.it/x70_hevc_1200


Considerations a couple of months later (May 2015)

Further considerations (November 2015)

Other things are coming: 4K (4:2:0) with sources downloaded from the web... but the time needed to compress them on my small Core 2 Duo is terrible.

Not only that: in July 2015 VLC stopped compressing in HEVC with the x265 library.

No problem, I can use ffmpeg, the open source software that can also encode with the x265 library. But there are a few problems I'm investigating, not only the speed.

The 1602 façade by Carlo Maderno is 114.69 meters wide by 45.44 meters tall (from Wikipedia), so the pitch of the projection at the Jubilee opening night show was 1.4 cm: one pixel every 1.4 centimeters.

Consider scanning an A4 sheet (21 by 29.7 cm) at 300 dpi (dots per inch, about 12 pixels per millimeter): landscape oriented, that sheet is a 3K image (3507 pixels wide).

Showing the smallest "8K" frame (7680x4320) on a professional modular LED display with a 4 mm pitch (as used for theatrical backdrops) generates an image 30.72 meters wide by 17.28 meters tall.
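The three size comparisons above are plain arithmetic and can be checked with a few lines of Python. The helper names are invented for this sketch; the figures are the ones quoted in the text.

```python
INCH_CM = 2.54


def pixels_across(length_m: float, pitch_m: float) -> int:
    """Pixels needed to cover a length at a given pixel pitch."""
    return round(length_m / pitch_m)


def scanned_pixels(length_cm: float, dpi: int) -> int:
    """Whole pixels produced by scanning a length at a given dpi."""
    return int(length_cm / INCH_CM * dpi)


def wall_size_m(pixels: int, pitch_mm: float) -> float:
    """Physical size of a LED wall with the given pixel count and pitch."""
    return pixels * pitch_mm / 1000


if __name__ == "__main__":
    # Facade, 114.69 m by 45.44 m, one pixel every 1.4 cm
    print(pixels_across(114.69, 0.014), pixels_across(45.44, 0.014))
    # A4 sheet, long and short side, scanned at 300 dpi
    print(scanned_pixels(29.7, 300), scanned_pixels(21.0, 300))
    # 7680x4320 frame on a 4 mm pitch LED wall
    print(wall_size_m(7680, 4), wall_size_m(4320, 4))
```

With a 1.4 cm pitch the façade works out to roughly 8192 by 3246 pixels, so the projection was itself about "8K" wide.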

But at home, do we need such sizes?

The market continuously pushes us to buy wider and wider screens.

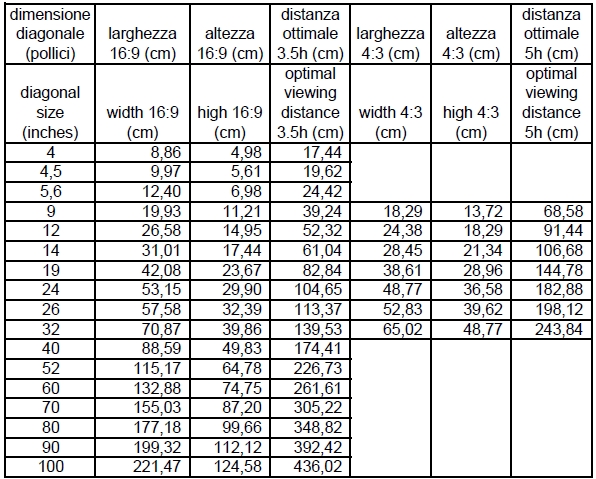

The following table shows the sizes in mm and the optimal viewing distances of screens by diagonal size in inches.

A 100 inch screen with true 8K resolution measures 2.21 by 1.24 meters, which means a resolution of about 35 dots per centimeter, 88 dots per inch, about 3.5 dots per millimeter.
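The figures for the 100 inch screen can be rederived from the diagonal alone. This is a small sketch assuming a 16:9 panel with 7680 pixels across; the function names are mine.

```python
import math

INCH_M = 0.0254


def screen_size_m(diagonal_in: float, aspect=(16, 9)):
    """Width and height in meters of a screen with the given diagonal."""
    w, h = aspect
    diag_units = math.hypot(w, h)
    width = diagonal_in * w / diag_units * INCH_M
    height = diagonal_in * h / diag_units * INCH_M
    return width, height


def pixel_density(pixels: int, length_m: float):
    """Pixels per centimeter and per inch along one side."""
    per_cm = pixels / (length_m * 100)
    return per_cm, per_cm * 2.54


width_m, height_m = screen_size_m(100)
per_cm, per_inch = pixel_density(7680, width_m)

if __name__ == "__main__":
    print(f"{width_m:.3f} m x {height_m:.3f} m")
    print(f"{per_cm:.1f} px/cm, {per_inch:.1f} px/inch, {per_cm / 10:.2f} px/mm")
```

The density comes out a little under 35 pixels per centimeter, that is slightly less than 3.5 pixels per millimeter.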

Our visual acuity is about one arcminute (1/60 of a degree): at 3.5 meters that corresponds to about one millimeter.
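A quick way to check the viewing distance argument is to convert an angular acuity into a size at a given distance. The sketch below assumes an acuity of about one arcminute, a commonly quoted figure for normal eyesight; that value is my assumption here and can be changed through the parameter. The pixel pitch uses the 2.21 m width of the 100 inch 8K screen mentioned above.

```python
import math


def smallest_detail_mm(distance_m: float, acuity_arcmin: float = 1.0) -> float:
    """Size of the smallest detail the eye can separate at a distance."""
    angle_rad = math.radians(acuity_arcmin / 60)
    return distance_m * 1000 * math.tan(angle_rad)


detail_mm = smallest_detail_mm(3.5)
# Pixel pitch of a 100 inch 8K screen: 2.21 m spread over 7680 pixels
pixel_pitch_mm = 2210 / 7680

if __name__ == "__main__":
    print(f"resolvable detail at 3.5 m: {detail_mm:.2f} mm")
    print(f"pixel pitch of the screen:  {pixel_pitch_mm:.2f} mm")
```

Under this assumption the single pixels of such a screen are several times smaller than what the eye can separate from the sofa.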

When, in summer 2020, we went to buy a new flat screen TV (a 4K), the salesperson told us: "this screen technology is much, much poorer than the other [the nanoled tech]: it has one LED every 8 pixels".

I made a quick count: with about 2000 pixels over 80 cm, it means that you could notice luminosity gradients on squares of about 3 to 4 mm. But while this is true for OLED, where coloured light is emitted pixel by pixel (though OLED screens suffer from other problems), and partially for nanoled technologies, the luminosity of the light behind an LCD screen does not change.
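The quick count can be written down as follows. It is only a sketch using the rounded figures from the text (about 2000 pixels over 80 cm, one LED every 8 pixels), with a function name invented for the purpose.

```python
def backlight_zone_mm(pixels: int, length_cm: float, pixels_per_led: int) -> float:
    """Side of the area lit by a single LED, in millimeters."""
    pixel_pitch_mm = length_cm * 10 / pixels
    return pixel_pitch_mm * pixels_per_led


zone_mm = backlight_zone_mm(2000, 80, 8)

if __name__ == "__main__":
    print(f"one LED covers a square of about {zone_mm:.1f} mm per side")
```

The result is a few millimeters, the same order of magnitude as the estimate above.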

Consumerism, the soul of commerce...

a lot of passes have been done (from early video compressions)